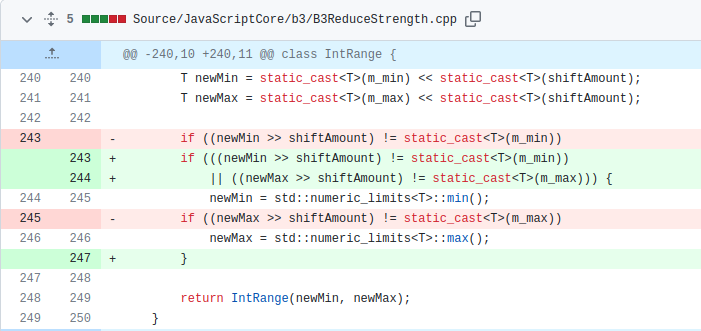

Most steps are the same except for the last line: the CheckAdd operation was reduced to a simple Add operation, which lacks overflow checks during codegen. This substitution should not have happened as this operation can theoretically overflow and hence should require overflow checks. Therefore, based on this IR we can see that the bug is triggered.

Due to the incorrect range computation in the shl() function, the CheckAdd node incorrectly determines that the subtraction operation cannot overflow and drops the overflow checks, converting the node into an ordinary Add node. This gives us a way to convert the range overflow into an actual integer overflow in the JIT-ed code. Next, we will see how this can be leveraged to get a controlled out-of-bounds read/write on the JavaScriptCore heap.
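To make the effect of a wrong range concrete, here is a minimal Python sketch of a range-based reduction. It is a simplified model, not WebKit code: the function names, the (lo, hi) tuple representation, and the example ranges are all assumptions chosen for illustration, and the subtraction is modeled as adding the constant -1.

```python
INT32_MIN, INT32_MAX = -2**31, 2**31 - 1


def wrap_int32(x):
    """Two's-complement wraparound, as an unchecked 32-bit add behaves."""
    return (x + 2**31) % 2**32 - 2**31


def reduce_check_add(left_range, right_range):
    """Choose the node kind from the *claimed* operand ranges (lo, hi).

    Hypothetical helper: if the sum of the claimed ranges fits in int32,
    the overflow check is considered redundant and is dropped.
    """
    lo = left_range[0] + right_range[0]
    hi = left_range[1] + right_range[1]
    return "Add" if INT32_MIN <= lo and hi <= INT32_MAX else "CheckAdd"


def execute(kind, a, b):
    """Run the node on concrete values; only CheckAdd detects overflow."""
    result = a + b
    if kind == "CheckAdd" and not INT32_MIN <= result <= INT32_MAX:
        raise OverflowError("overflow check fired; JIT code would bail out")
    return wrap_int32(result)


# A sound range keeps the check; an (assumed) too-narrow range drops it.
sound_kind = reduce_check_add((INT32_MIN, INT32_MAX), (-1, -1))
buggy_kind = reduce_check_add((0, 1), (-1, -1))

# The real value lies outside the claimed range, so the plain Add wraps.
wrapped = execute(buggy_kind, INT32_MIN, -1)
```

With the sound range the node stays a CheckAdd and the overflowing input raises an error. With the too-narrow range the node becomes a plain Add and the same input silently wraps around to INT32_MAX, which is the behavior the rest of the exploit builds on.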

Exploitation

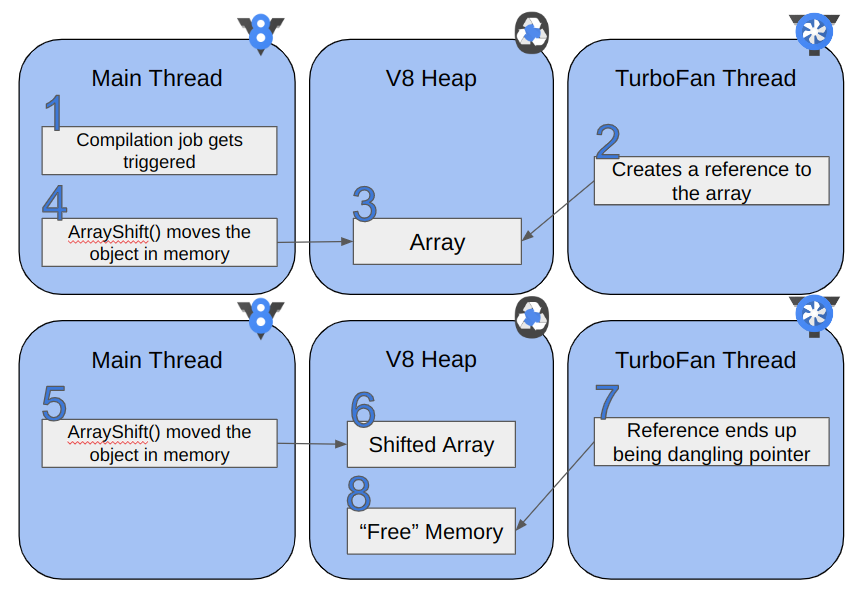

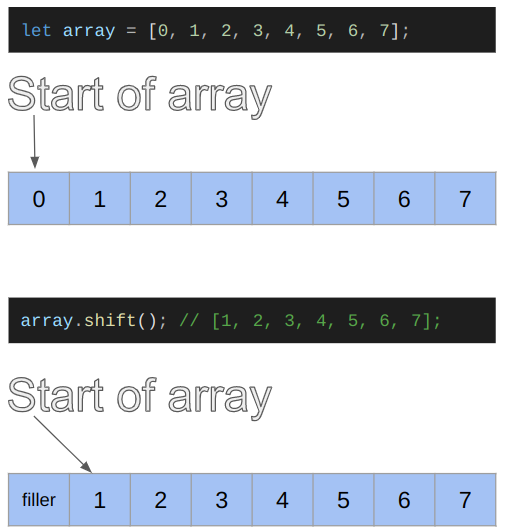

To exploit this bug, we first try to convert the possible integer overflow into an out-of-bounds read/write on a JavaScript Array. After we get an out-of-bounds read/write, we create the addrof and fakeobj primitives. We need some knowledge of how objects are represented in JavaScriptCore. However, this has already been covered in detail by many others, so we will skip it for this post. If you are unfamiliar with object representation in JSC, we urge you to check out LiveOverflow’s excellent blogs on WebKit and the “Attacking JavaScript Engines” Phrack article by Samuel Groß.

We start by covering some concepts on the DFG.

DFG Relationships

In this section, we dive deeper into how DFG infers range information for nodes. This material is not required to understand the bug, but it gives a deeper understanding of the concepts involved. If you do not feel like diving too deep, feel free to skip to the next section. You will still be able to understand the rest of the post.

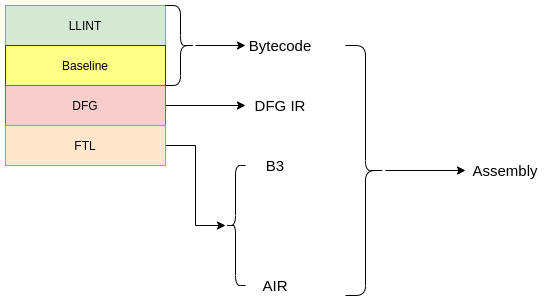

As mentioned before, JSC has three JIT compilers: the baseline JIT, the DFG JIT, and the FTL JIT. We saw that this vulnerability lies in the FTL JIT code and occurs after the DFG optimizations are run. Since the incorrect range is only used to reduce the “checked” versions of the Add, Sub and Mul nodes and is never used anywhere else, there is no way of eliminating a bounds check in this phase. Thus, it is necessary to look into the DFG IR phases, which take place before the code is lowered to B3 IR, for ways to remove bounds checks.

An interesting phase for the DFG IR is the Integer Range Optimization Phase (WebKit/Source/JavaScriptCore/dfg/DFGIntegerRangeOptimizationPhase.cpp), which attempts to optimize certain instructions based on the range of their input operands. Strictly speaking, this phase is only executed in the FTL compiler and not in the DFG compiler, but since it operates on the DFG IR, we refer to it as a DFG phase. This phase can be considered analogous to the “Typer phase” in TurboFan, the Chrome JIT compiler, or the “Range Analysis Phase” in IonMonkey, the Firefox JIT compiler. The Integer Range Optimization Phase is fairly complex overall, so only the details relevant to this exploit are discussed here.

In the Integer Range Optimization Phase, the ranges of a variety of nodes are computed in terms of Relationship class objects. To clarify how the Relationship objects work, let @a, @b, and @c be nodes in the IR. If @a is less than @b, it is represented in the Relationship object as @a < @b + 0. Now, this phase may encounter another operation on the node @a, which results in the relationship @a > @c + 5. The phase keeps track of all such relationships, and the final relationship is computed as the logical AND of all the intermediate relationships. Thus, in the above case, the final result would be @a > @c + 5 && @a < @b + 0.
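The idea can be modeled in a few lines of Python. This is only an illustrative sketch under stated assumptions: the `Relationship` dataclass, its field names, and the `all_hold` helper are hypothetical stand-ins, not the actual WebKit class or its API.

```python
from dataclasses import dataclass
import operator

# Comparison kinds supported by this toy model (an assumption).
_OPS = {"<": operator.lt, ">": operator.gt, "==": operator.eq, "!=": operator.ne}


@dataclass(frozen=True)
class Relationship:
    """Models a fact of the form: @left <kind> @right + offset."""
    left: str
    kind: str
    right: str
    offset: int

    def holds(self, env):
        # env maps node names to concrete integer values.
        return _OPS[self.kind](env[self.left], env[self.right] + self.offset)

    def __str__(self):
        return f"@{self.left} {self.kind} @{self.right} + {self.offset}"


def all_hold(relationships, env):
    """The combined fact is the logical AND of every recorded relationship."""
    return all(r.holds(env) for r in relationships)


# The two relationships from the text: @a < @b + 0 and @a > @c + 5.
facts = [Relationship("a", "<", "b", 0), Relationship("a", ">", "c", 5)]

inside = all_hold(facts, {"a": 7, "b": 10, "c": 1})   # 7 < 10 and 7 > 6
outside = all_hold(facts, {"a": 6, "b": 10, "c": 1})  # 6 > 6 fails
```

Here `inside` is true because both relationships hold for the chosen values, while `outside` is false because @a > @c + 5 is violated. The real phase reasons about these facts symbolically rather than evaluating them on concrete values.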

In the case of the CheckInBounds node, if the relationships recorded for the index prove that it is greater than or equal to zero and less than the length, then the CheckInBounds node is eliminated. The following snippet highlights this.